Self-Hosted AI: From 8B to 30B on the Same €180 GPU

By Opteia

Six weeks ago, we published our guide to running AI locally on a Tesla P40 — a used server GPU that costs about €180. The setup ran Llama 3 8B: a solid general-purpose model, but limited in reasoning depth and completely unable to process images.

Today, that same GPU runs Qwen3-VL-30B-A3B: a 30-billion-parameter model with vision support and a 256K token context window. Same hardware. No new spend.

Here’s how we did it, and what it means for anyone considering self-hosted AI.

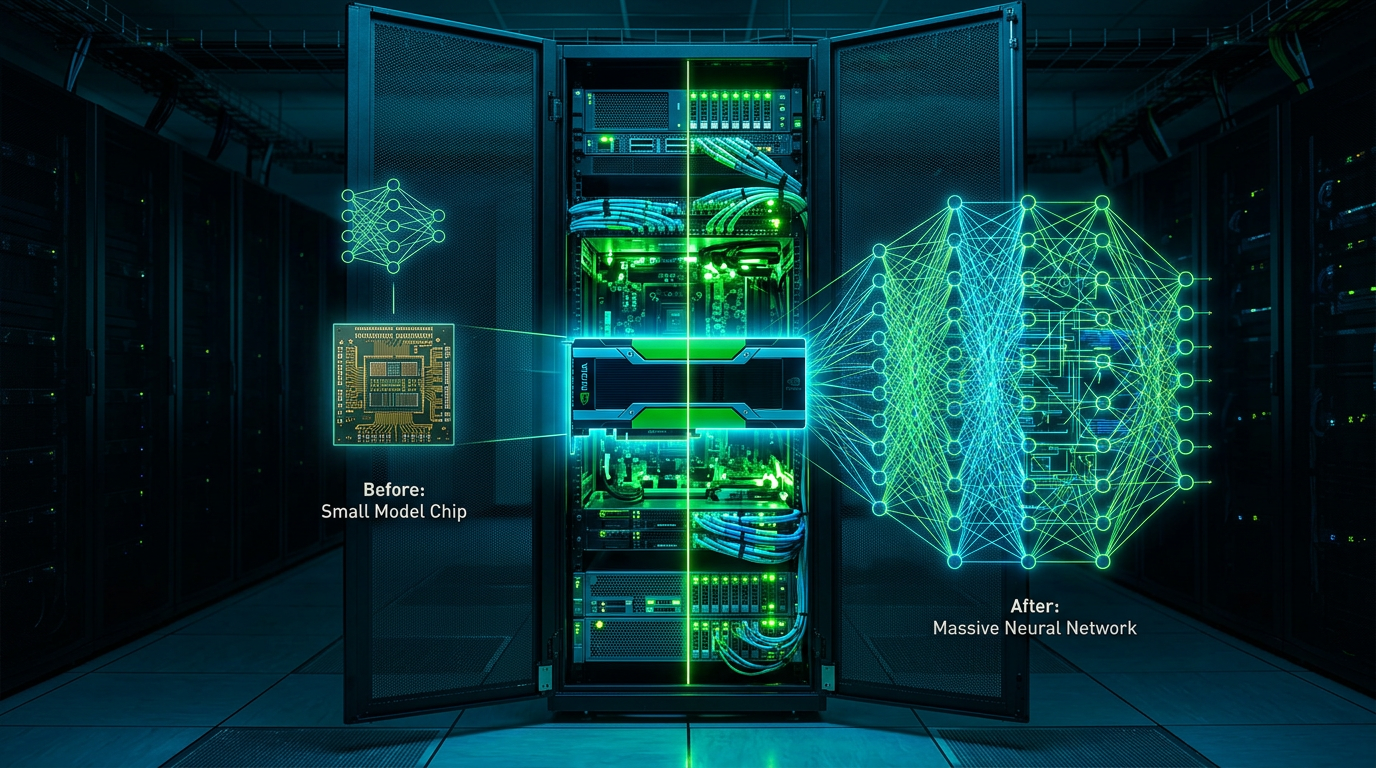

The MoE Trick

Qwen3-VL-30B-A3B is a Mixture-of-Experts (MoE) model. Out of 30 billion total parameters, only 3 billion are active for any given token. The model has 128 expert modules and routes each token through just 8 of them.
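To make the routing idea concrete, here is a minimal sketch of top-k expert selection in plain Python. It is one common formulation (pick the 8 highest router scores, then softmax over just those), not necessarily the exact gating used inside Qwen3-VL; the random logits stand in for a real router's output, and the function name `route_token` is ours.

```python
import math
import random

NUM_EXPERTS = 128  # from the article
TOP_K = 8          # from the article


def route_token(router_logits, top_k=TOP_K):
    """Return (expert_index, weight) pairs for the top_k highest-scoring experts."""
    # Indices of the top_k largest router scores.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Softmax over the selected scores only, so the mixing weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


# Placeholder router scores; a real model computes these from the token's hidden state.
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

chosen = route_token(logits)
print(len(chosen), "of", NUM_EXPERTS, "experts run for this token")
print("fraction of experts active:", TOP_K / NUM_EXPERTS)
```

Every token gets its own selection, so over a long sequence all 128 experts are exercised even though each token touches only 8.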

This means the GPU only needs to hold the active experts and the shared attention layers, roughly 3-4 GB of VRAM per forward pass. The remaining expert weights sit in CPU RAM and are used from there as needed.

The key flag: --n-cpu-moe 35. This tells llama.cpp to keep the MoE expert weights of the first 35 layers on the CPU, so the GPU holds only the attention layers and the experts of the remaining layers. On our P40 with 24 GB of VRAM, we use just 13.2 GB, leaving about 45% of the VRAM free.
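In upstream llama.cpp, the value passed to --n-cpu-moe counts layers: the expert weights of the first N layers stay on the CPU. The sketch below shows what that split looks like. The layer count of 48 is our assumption for a model of this class and is not stated in this article; the VRAM figures are the ones reported above.

```python
def split_expert_placement(n_layers, n_cpu_moe):
    """Return (cpu_layers, gpu_layers): where each layer's expert weights live."""
    n_cpu = min(max(n_cpu_moe, 0), n_layers)  # clamp to a valid layer count
    cpu_layers = list(range(n_cpu))           # first N layers: experts on CPU
    gpu_layers = list(range(n_cpu, n_layers)) # the rest: experts on GPU
    return cpu_layers, gpu_layers


N_LAYERS = 48  # ASSUMPTION: not stated in the article
cpu, gpu = split_expert_placement(N_LAYERS, n_cpu_moe=35)
print(f"expert weights on CPU: layers {cpu[0]}-{cpu[-1]} ({len(cpu)} layers)")
print(f"expert weights on GPU: layers {gpu[0]}-{gpu[-1]} ({len(gpu)} layers)")

# VRAM headroom, using the measurements reported in the article.
VRAM_TOTAL_GB = 24.0
VRAM_USED_GB = 13.2
vram_used = f"{VRAM_USED_GB / VRAM_TOTAL_GB:.0%}"
print("VRAM in use:", vram_used)
```

Lowering the value moves more expert weights onto the GPU, which should trade the spare VRAM for speed; we have not benchmarked other settings here.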

TurboQuant: 256K Context Without Flash Attention

The P40 is a Pascal GPU (compute capability 6.1, released 2016). It doesn’t support Flash Attention, which most modern cache compression techniques require. This used to limit us to short context windows.

A community fork called TurboQuant changed that. It adds turbo4 and turbo3 cache types that compress the KV cache without Flash Attention. The result: 256K token context windows on hardware that technically shouldn’t support them.
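A back-of-the-envelope estimate shows why cache compression matters at this context length. Everything model-specific below is an assumption for illustration: the layer count, KV head count, and head dimension are our guesses for a model of this class, and the 4-bit and 3-bit widths are inferred from the names turbo4 and turbo3 rather than taken from the fork's documentation. Per-block scale overhead is ignored.

```python
def kv_cache_gib(n_layers, n_kv_heads, head_dim, ctx_tokens, bits_k, bits_v):
    """Approximate KV cache size in GiB (keys plus values, no scale overhead)."""
    elems_per_tensor = n_layers * n_kv_heads * head_dim * ctx_tokens
    total_bits = elems_per_tensor * (bits_k + bits_v)
    return total_bits / 8 / 2**30


# ASSUMPTIONS: model dimensions are not stated in the article.
DIMS = dict(n_layers=48, n_kv_heads=4, head_dim=128)
CTX = 262144  # 256K tokens, from the article's configuration

f16 = kv_cache_gib(**DIMS, ctx_tokens=CTX, bits_k=16, bits_v=16)
# ASSUMPTION: turbo4 is roughly 4 bits/element, turbo3 roughly 3 bits/element.
compressed = kv_cache_gib(**DIMS, ctx_tokens=CTX, bits_k=4, bits_v=3)

print(f"f16 cache at 256K tokens:        {f16:.2f} GiB")
print(f"compressed cache at 256K tokens: {compressed:.2f} GiB")
```

Under these assumptions, an uncompressed cache for a full 256K context would by itself be about as large as the P40's entire VRAM, while the compressed one takes a small fraction of it.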

The fork is built from source targeting sm_61 (Pascal compute capability). It’s available at github.com/TheTom/llama-cpp-turboquant.

Vision Support

The VL variant includes a vision encoder (mmproj) that adds image understanding. We use it for analyzing screenshots and diagrams, processing document scans, and answering visual questions about business reports. The vision encoder uses about 360 MB of VRAM, which is negligible on the P40.
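For readers who want to try an image query, here is a hedged sketch of a client. It assumes the server exposes the OpenAI-compatible /v1/chat/completions endpoint that upstream llama-server provides, that it accepts images as base64 data URIs, and that it listens on localhost:8080; none of these details are confirmed for the TurboQuant fork in this article. The helper names are ours.

```python
import base64
import json
import urllib.request


def build_vision_request(image_bytes, question, mime="image/png"):
    """Build an OpenAI-style chat payload with one text part and one image part."""
    data_uri = f"data:{mime};base64," + base64.b64encode(image_bytes).decode("ascii")
    return {
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {"type": "image_url", "image_url": {"url": data_uri}},
                ],
            }
        ],
        "max_tokens": 256,
    }


def ask(payload, base_url="http://localhost:8080"):
    """POST the payload and return the reply text. ASSUMPTION: host, port, and path."""
    req = urllib.request.Request(
        base_url + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)["choices"][0]["message"]["content"]


# Placeholder bytes; in practice read a real file, e.g. open("report.png", "rb").read()
payload = build_vision_request(b"\x89PNG placeholder", "What does this chart show?")
print(json.dumps(payload)[:80], "...")
# reply = ask(payload)  # uncomment once the server is running
```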

Updated Performance

Metric | Old (Llama 3 8B) | New (Qwen3-VL-30B-A3B)
--- | --- | ---
Parameters | 8B | 30B (3B active)
Model size | 4.9 GB | 17 GB
Generation speed | ~30 tok/s | 13.8 tok/s
Prompt speed | ~755 tok/s | 144.5 tok/s
Context window | ~32K | 256K
Vision | No | Yes (mmproj)
VRAM used | ~6 GB | 13.2 GB

Yes, generation speed dropped from about 30 to 13.8 tokens per second, and prompt processing from about 755 to 144.5. But we gained a model with nearly 4x the parameters, significantly better reasoning, vision capabilities, and an 8x larger context window, while still using only 55% of the GPU’s VRAM.
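To put the throughput numbers in practical terms, the sketch below estimates end-to-end response time for a single request. The workload (a 2,000-token prompt and a 300-token answer) is a hypothetical example, and the calculation assumes throughput stays constant regardless of how full the context is, which is optimistic for long contexts.

```python
def response_seconds(prompt_tokens, output_tokens, prompt_tps, gen_tps):
    """Time to read the prompt plus time to generate the answer, in seconds."""
    return prompt_tokens / prompt_tps + output_tokens / gen_tps


# Throughput figures from the comparison above (tokens per second).
OLD = dict(prompt_tps=755.0, gen_tps=30.0)
NEW = dict(prompt_tps=144.5, gen_tps=13.8)

# HYPOTHETICAL workload, chosen only for illustration.
PROMPT_TOKENS, OUTPUT_TOKENS = 2000, 300

old_s = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, **OLD)
new_s = response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, **NEW)
print(f"Llama 3 8B:       {old_s:.1f} s")
print(f"Qwen3-VL-30B-A3B: {new_s:.1f} s")
```

For this example the larger model takes roughly three times as long per request, which is the price of the added capability on the same card.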

The Updated Docker Compose Configuration

    image: llama-cpp-turboquant:server-cuda
    command: ["--parallel", "1", "--n-cpu-moe", "35"]
    environment:
      LLAMA_ARG_CTX_SIZE: "262144"
      LLAMA_ARG_CACHE_TYPE_K: turbo4
      LLAMA_ARG_CACHE_TYPE_V: turbo3
      LLAMA_ARG_N_GPU_LAYERS: "999"
      LLAMA_ARG_THREADS: "8"

The key differences from the original setup:

- Custom TurboQuant image instead of official llama.cpp (required for Pascal GPUs)

- --n-cpu-moe 35 keeps the MoE expert weights of the first 35 layers on the CPU (the trick that makes 30B fit in 24 GB)

- turbo4/turbo3 cache compression enables 256K context without Flash Attention
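If you run the server outside Docker, the same settings can be passed as command-line flags. The sketch below translates the configuration shown above into a single command. The flag names are the upstream llama.cpp equivalents of these environment variables; we assume the fork keeps them unchanged. The binary name `llama-server` is also the upstream one, and the model and mmproj paths are deliberately left out because they are not part of the fragment above.

```python
import shlex

# Upstream llama.cpp flag for each environment variable used in the configuration.
ENV_TO_FLAG = {
    "LLAMA_ARG_CTX_SIZE": "--ctx-size",
    "LLAMA_ARG_CACHE_TYPE_K": "--cache-type-k",
    "LLAMA_ARG_CACHE_TYPE_V": "--cache-type-v",
    "LLAMA_ARG_N_GPU_LAYERS": "--n-gpu-layers",
    "LLAMA_ARG_THREADS": "--threads",
}


def to_cli(command, environment, binary="llama-server"):
    """Combine explicit args and environment settings into one shell command."""
    args = [binary] + list(command)
    for name, value in environment.items():
        args += [ENV_TO_FLAG[name], str(value)]
    return shlex.join(args)


command = ["--parallel", "1", "--n-cpu-moe", "35"]
environment = {
    "LLAMA_ARG_CTX_SIZE": "262144",
    "LLAMA_ARG_CACHE_TYPE_K": "turbo4",  # fork-specific cache type
    "LLAMA_ARG_CACHE_TYPE_V": "turbo3",  # fork-specific cache type
    "LLAMA_ARG_N_GPU_LAYERS": "999",
    "LLAMA_ARG_THREADS": "8",
}

cli = to_cli(command, environment)
print(cli)
```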

What This Means for Self-Hosted AI

The original argument was: self-hosted AI costs a fraction of cloud alternatives and gives you more control.

This update strengthens it. The same €180 GPU that was running an 8B model six weeks ago is now running a 30B vision-language model with 256K context. No hardware upgrade. No new spend. Just better software techniques — MoE offloading and TurboQuant cache compression — applied to the same silicon.

The open-source community moves fast. If you own your infrastructure, you capture that velocity. If you rent it, you wait for your vendor’s roadmap.

The cost hasn’t changed. The capability has multiplied.

— Opteia builds self-hosted AI infrastructure for SMEs. If you’re considering running AI on your own terms, book a free consultation.
